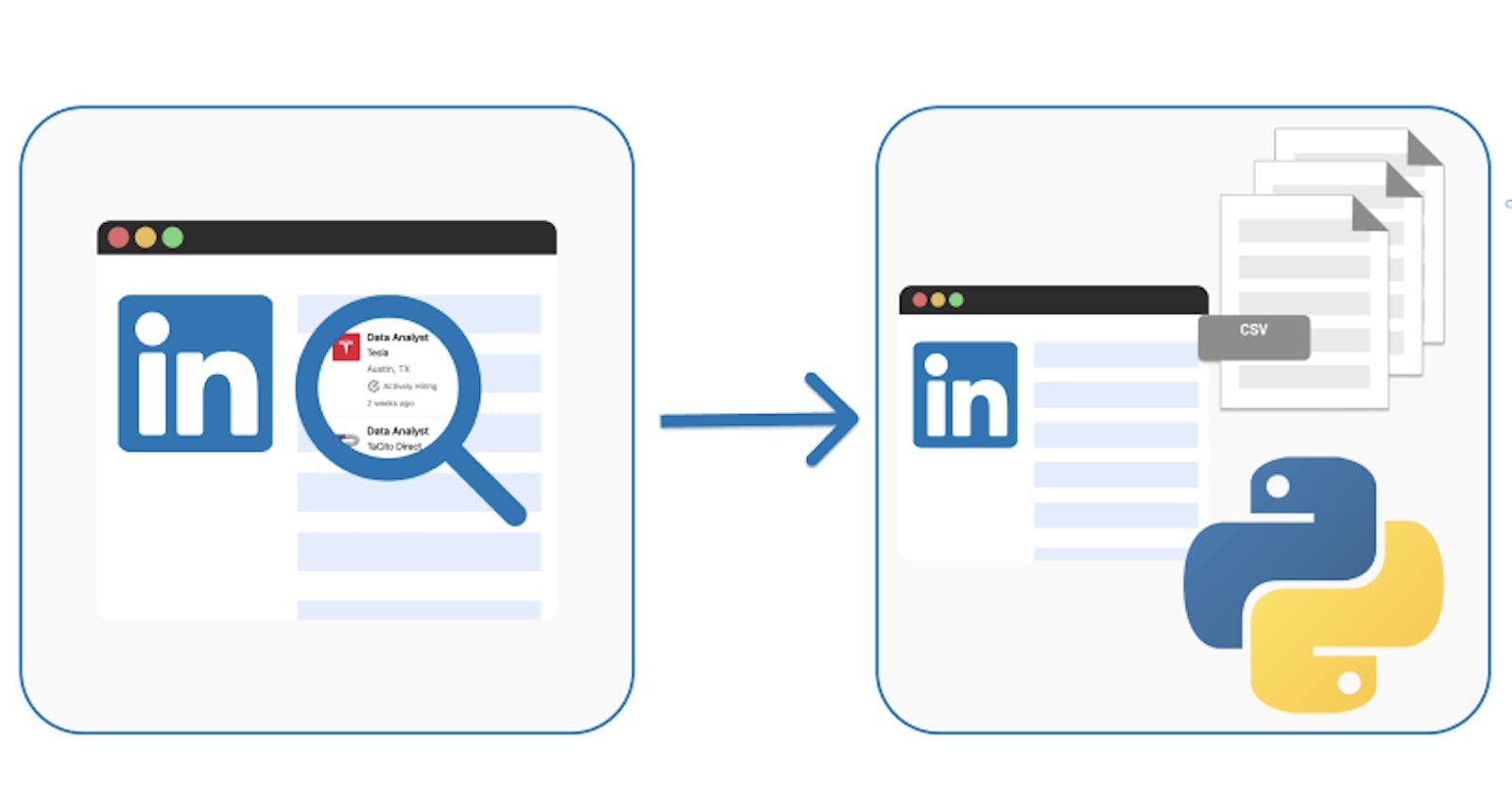

Building your own LinkedIn Profile Scraper

Let the machine handle the heavy lifting.

Web scraping is a technique for retrieving information from a web page with a program and reusing it in another context. The programs that do this, called web scrapers or bots, load pages automatically and extract the content you are interested in.

Why Scrape LinkedIn ❓

Scraping is useful whenever the data you need lives on a website rather than in a downloadable file. For instance, LinkedIn has an enormous user base of more than 828 million members, and you can put it to use by gathering data from its members' public profiles...

You can then store these profiles in an Excel or CSV file and query them, which lets you run operations specific to your needs. For instance, I found that searching through a ton of recruiter profiles for details such as emails and other contact information can be quite time-consuming, so I considered how Python could help automate the process. Your reasons may differ: you might want to gather contact information for people who work at a company you'd love to work for, so you can set up phone calls and start a conversation.

A common misperception is that scraping is inherently criminal. It is not, although whether a particular scrape is allowed depends on the website's terms of service and on local law. Those drawbacks won't be covered in this post; instead, you'll learn how to use Python packages and tools to build a LinkedIn profile scraper.

Pre-requisites 🔎

Make sure you have the following installed on your machine:

Python

BeautifulSoup

Selenium

Chrome WebDriver

Beautiful Soup

Beautiful Soup is a Python library for pulling data out of HTML and XML files. It builds a parse tree from the document for easy traversal and manipulation, and lets you search that tree with a variety of filters to extract the data you want. The code in this post uses the lxml parser, so install it alongside Beautiful Soup.

$ pip install beautifulsoup4 lxml
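As a quick illustration of the search filters described above, here is a minimal sketch that parses a small, made-up HTML snippet. The markup, class names, and profile slug are assumptions for demonstration only and do not reflect LinkedIn's real page structure. It uses Python's built-in html.parser rather than lxml to keep the example light on dependencies.

```python
from bs4 import BeautifulSoup

# Hypothetical markup, invented for this example
HTML = """
<div class="card">
  <h1 class="name">Ada Lovelace</h1>
  <a class="profile-link" href="/in/ada-lovelace">View profile</a>
</div>
"""

soup = BeautifulSoup(HTML, "html.parser")

# find() returns the first matching tag; class_ filters on the CSS class
name = soup.find("h1", class_="name").get_text(strip=True)

# find_all() returns every match; get() reads an attribute value
links = [a.get("href") for a in soup.find_all("a", class_="profile-link")]

print(name)
print(links)
```

The same two calls, find() and find_all(), do most of the work in the scraper built later in this post.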

Selenium

Selenium is a popular open-source tool that is commonly used for automated testing of web applications. Selenium provides a way to interact with web pages through a web driver, which can simulate a user interacting with the page, such as clicking buttons, filling out forms, and navigating through pages.

$ pip install selenium

Chrome WebDriver

Chrome WebDriver (ChromeDriver) is a separate executable that Selenium uses to control the Chrome browser. It is maintained by the Chromium project rather than bundled with Selenium, so it has to be available on your machine.

P.S. Find your browser's version on the settings page, then download the driver that matches that version.
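The two common ways of pointing Selenium at the driver can be sketched as follows, assuming Selenium 4.6 or newer. The helper name make_driver and its driver_path parameter are hypothetical. When no path is given, recent Selenium releases try to locate or download a matching driver through Selenium Manager, so a manual download may not be needed.

```python
def make_driver(driver_path=None):
    """Return a Chrome WebDriver (hypothetical helper, Selenium 4 assumed)."""
    # Imported inside the function so the helper can be defined
    # even on a machine where Selenium is not installed yet.
    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service

    if driver_path:
        # Use the driver executable you downloaded yourself
        return webdriver.Chrome(service=Service(driver_path))
    # No path given: let Selenium Manager (Selenium 4.6+) resolve the driver
    return webdriver.Chrome()
```

Calling make_driver() opens a Chrome window, so it is left uncalled here; pass the path to your own chromedriver executable if you prefer to manage the download yourself.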

Python

Python is a high-level, interpreted programming language that is widely used for a variety of tasks such as web development, data analysis, artificial intelligence, and scientific computing. Python is a cross-platform language, which means that it can run on multiple operating systems such as Windows, macOS, and Linux.

Implementation 🛠️

Once the required libraries are installed on your machine, we can start building the scraper.

# Importing modules
import time

from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait
from bs4 import BeautifulSoup as bs

# Specify the location of the installed driver (a raw string keeps the backslashes literal)
PATH = r"C:\Program Files (x86)\chromedriver.exe"
# Selenium 4 receives the driver path through a Service object
driver = webdriver.Chrome(service=Service(PATH))

# Web page to be accessed
driver.get("https://www.linkedin.com/")

The task is easier to build and debug if we split it into the steps the bot will take. The breakdown consists of the following:

Authentication

Search

Accessing a profile URL

Getting useful information from the accessed profile and storing it inside a CSV file

1. Authentication

Create a function to handle the authentication step of the process:

def authenticate():
    try:
        # Locate the email field by its XPath and type the address
        email_field = driver.find_element(By.XPATH, '//*[@id="session_key"]')
        email_field.send_keys("User/email address")
        # Program should sleep for 3 secs
        time.sleep(3)

        password_field = driver.find_element(By.XPATH, '//*[@id="session_password"]')
        password_field.send_keys("Password")
        time.sleep(3)

        login_button = driver.find_element(By.XPATH, '//*[@id="main-content"]/section[1]/div/div/form/button')
        login_button.click()
    except Exception as error:
        # Report what went wrong, then close the browser
        print(f"An error occurred: {error}")
        driver.quit()

authenticate()
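Hardcoding credentials in the script makes them easy to leak. One alternative, sketched here under the assumption that you export two environment variables named LINKEDIN_EMAIL and LINKEDIN_PASSWORD (both names are made up for this example), is to read them at runtime and pass the values to send_keys(). The helper name load_credentials is also hypothetical.

```python
import os


def load_credentials(env=os.environ):
    """Read the login details from environment variables.

    LINKEDIN_EMAIL and LINKEDIN_PASSWORD are hypothetical variable names.
    """
    email = env.get("LINKEDIN_EMAIL")
    password = env.get("LINKEDIN_PASSWORD")
    if not email or not password:
        # Fail early with a clear message instead of typing empty values
        raise RuntimeError("Set LINKEDIN_EMAIL and LINKEDIN_PASSWORD first")
    return email, password


# Demonstration with an in-memory mapping instead of the real environment
email, password = load_credentials(
    {"LINKEDIN_EMAIL": "me@example.com", "LINKEDIN_PASSWORD": "s3cret"}
)
print(email)
```

Inside authenticate(), the two returned values would replace the placeholder strings passed to send_keys().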

XPath expressions are used to locate each element (the email field, the password field, and the sign-in button) in the authentication function above. You can obtain an element's XPath by right-clicking it, choosing "Inspect" in your browser, and copying the XPath from the developer tools.

Selenium's By class provides several locator strategies for finding particular HTML elements, e.g.

By.CLASS_NAME

By.XPATH

By.ID

By.TAG_NAME
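To see what an expression such as //*[@id="session_key"] actually selects, the sketch below runs the same kind of query against a tiny, made-up login form using Python's standard xml.etree.ElementTree module. This is an illustration only: ElementTree supports just a subset of XPath and expects paths to start with a dot, and the form markup is an assumption, not LinkedIn's real page.

```python
import xml.etree.ElementTree as ET

# Hypothetical login form, invented for this example
FORM = """
<form id="login">
  <input id="session_key" name="email" />
  <input id="session_password" name="password" />
  <button type="submit">Sign in</button>
</form>
"""

root = ET.fromstring(FORM)

# ".//*[@id='session_key']": any element, at any depth, whose id is session_key
email_field = root.find(".//*[@id='session_key']")

# ".//button": locate by tag name instead, similar in spirit to By.TAG_NAME
button = root.find(".//button")

print(email_field.get("name"))
print(button.text)
```

Locating by id is usually more robust than a long positional path, because it keeps working when the surrounding layout changes.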

2. Search

The next step in the breakdown is searching: you query a job role and then filter the results by people to get a list of profiles that mention that role.

time.sleep(2)

def search():
    try:
        # Confirm the page is loaded before searching
        WebDriverWait(driver, 10).until(
            EC.presence_of_element_located((By.CLASS_NAME, 'authentication-outlet'))
        )
        search_box = driver.find_element(By.XPATH, '//*[@id="global-nav-typeahead"]/input')
        # Search Input
        search_box.send_keys('Software Developer')
        # Search Input: ENTER
        search_box.send_keys(Keys.RETURN)

        # Wait for the "People" filter button; until() returns the located element
        people_xpath = '/html/body/div[6]/div[3]/div[2]/section/div/nav/div/ul/li[2]/button'
        people_btn = WebDriverWait(driver, 10).until(
            EC.presence_of_element_located((By.XPATH, people_xpath))
        )
        people_btn.click()
    except Exception as error:
        print(f"An error occurred: {error}")
        driver.quit()

search()

The purpose of time.sleep() is to simulate the pauses a human would make; otherwise, the bot executes all of its commands immediately, raising a flag that could result in your profile being blocked by LinkedIn.
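A fixed pause of exactly the same length is itself a regular pattern. A common variation, offered here as an assumption rather than something LinkedIn is known to require, is to sleep for a random interval between actions. The helper name human_pause and its parameters are hypothetical.

```python
import random
import time


def human_pause(min_seconds=2.0, max_seconds=5.0, sleep=time.sleep):
    """Sleep for a random duration and return the number of seconds waited.

    human_pause and its parameters are hypothetical names for this sketch.
    """
    delay = random.uniform(min_seconds, max_seconds)
    sleep(delay)
    return delay


# Short bounds here so the demonstration finishes quickly
waited = human_pause(0.01, 0.02)
print(f"waited {waited:.3f}s")
```

In the scraper, a call such as human_pause() could stand in for each time.sleep(3).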

3. Accessing Profiles

Next, collect each profile URL and save it in a list so that it can be visited later.

def profile():
    try:
        WebDriverWait(driver, 10).until(
            EC.presence_of_element_located((By.CLASS_NAME, 'search-results-container'))
        )
        page_source = bs(driver.page_source, 'lxml')
        links = page_source.find_all('a', class_='app-aware-link')
        all_profile = []
        for link in links:
            profile_ID = link.get('href')
            # Skip anchors without an href and URLs that were already collected
            if profile_ID and profile_ID not in all_profile:
                all_profile.append(profile_ID)
        return all_profile
    except Exception as error:
        print(f"An error occurred: {error}")
        driver.quit()
        # Return an empty list so callers can still iterate over the result
        return []

profile()
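The links collected this way may also include companies, posts, or the same profile repeated with different tracking parameters, since the app-aware-link class is not necessarily exclusive to profile links. LinkedIn profile URLs have a path beginning with /in/, which allows a simple filter. The sketch below is one possible clean-up step; the helper name clean_profile_urls and the sample URLs are made up for illustration.

```python
from urllib.parse import urlsplit


def clean_profile_urls(hrefs):
    """Keep only /in/ links, drop query strings, and remove duplicates."""
    seen = set()
    cleaned = []
    for href in hrefs:
        if not href:
            continue  # anchors without an href come back as None
        parts = urlsplit(href)
        if not parts.path.startswith("/in/"):
            continue  # not a profile link
        # Rebuild the URL without its query string and fragment
        url = parts._replace(query="", fragment="").geturl()
        if url not in seen:
            seen.add(url)
            cleaned.append(url)
    return cleaned


# Hypothetical hrefs standing in for what find_all() might return
sample = [
    "https://www.linkedin.com/in/jane-doe?miniProfileUrn=abc",
    "https://www.linkedin.com/in/jane-doe?miniProfileUrn=xyz",
    "https://www.linkedin.com/company/acme/",
    None,
    "https://www.linkedin.com/in/john-smith",
]
result = clean_profile_urls(sample)
print(result)
```

Running the list through a filter like this before visiting each URL keeps the bot from loading pages that are not profiles.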

4. Getting useful information

Finally, loop over each profile URL to retrieve the attributes that will be written to the CSV file.

# import the CSV module
import csv

def convert_into_csv():
    try:
        page_url = profile()
        headers = ['Name', 'Position', 'Location', 'URL']
        # Open the file once so each profile adds a row instead of overwriting the file
        with open('output.csv', 'w', newline='') as file_output:
            writer = csv.DictWriter(file_output, delimiter=',', lineterminator='\n', fieldnames=headers)
            writer.writeheader()
            for url in page_url:
                driver.get(url)
                soup = bs(driver.page_source, 'lxml')
                cards = soup.find_all('div', class_='ph0 pv2 artdeco-card mb2')
                # The loop variable is not named "profile", which would shadow the profile() function
                for card in cards:
                    name = card.find('h1', class_='text-heading-xlarge').text.strip()
                    current_position = card.find('div', class_='text-body-medium').text.strip()
                    # find() returns a single tag, so no [0] index is needed
                    location = card.find('div', class_='pb2 pv-text-details__left-panel').text.strip()
                    writer.writerow({headers[0]: name, headers[1]: current_position, headers[2]: location, headers[3]: url})
    except Exception as error:
        print(f"An error occurred: {error}")
        driver.quit()

convert_into_csv()
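With csv.DictWriter, the header is normally written once and each profile then becomes one row. The sketch below shows that pattern on made-up rows, writing to an in-memory buffer instead of output.csv so it can be run without a browser. The names and locations are invented for illustration.

```python
import csv
import io

HEADERS = ["Name", "Position", "Location", "URL"]

# Hypothetical rows standing in for scraped profiles
rows = [
    {"Name": "Jane Doe", "Position": "Software Developer",
     "Location": "Lagos, Nigeria", "URL": "https://www.linkedin.com/in/jane-doe"},
    {"Name": "John Smith", "Position": "Backend Engineer",
     "Location": "Berlin, Germany", "URL": "https://www.linkedin.com/in/john-smith"},
]

# StringIO stands in for open('output.csv', 'w', newline='')
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=HEADERS, lineterminator="\n")
writer.writeheader()    # the header goes in once, before any row
writer.writerows(rows)  # one line per profile

print(buffer.getvalue())
```

Note that values containing a comma, such as the locations here, are quoted automatically by the csv module, so they still load as a single column in Excel.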

Conclusion

Selenium provides a simple API for driving a browser through WebDriver. The HTML it loads can then be parsed with a separate tool, Beautiful Soup. Both packages are dependable and useful companions for your web scraping endeavors.

In this article, you learned how to use Selenium and Beautiful Soup to scrape data from LinkedIn. You completed the entire web scraping procedure from beginning to end and created a script that retrieves and stores user profile data from LinkedIn. Feel free to explore the possibilities with this extensive pipeline in mind and these two strong libraries in your toolkit.

Note: Scraping data from a website without permission can violate the website's terms of service and, depending on the jurisdiction, may be illegal.